Why the NHTSA Data on Tesla Autopilot Crashes Is Misleading

Tesla has often made the news for the wrong reasons, frequently because of crashes involving its Autopilot system. Though the advanced driver assistance system’s name implies that it is fully autonomous, that’s not the case, and crashes can result when drivers expect too much of it. But is the data surrounding Tesla Autopilot crashes really as negative as some believe? Some argue that the National Highway Traffic Safety Administration’s (NHTSA) numbers are misleading.

So let’s take a closer look at the Tesla Autopilot crash data the NHTSA has compiled so far.

Critics have slammed Tesla’s Autopilot system

Tesla Autopilot is notorious for not actually being fully autonomous despite the name, and the results can be disastrous. Jalopnik has explained some of the many ways in which overreliance on Tesla’s Autopilot system has had negative consequences for drivers.

First, we should note that drivers who activate Tesla Autopilot are still expected to be aware of what their car is doing and to keep their hands on the wheel at all times. Nevertheless, some drivers fail to follow these protocols, and crashes can result. For example, the NHTSA has investigated more than 750 complaints about problems with Autopilot’s ability to detect metal bridges, S-shaped curves, oncoming and cross-traffic, and vehicles of various sizes, including large trucks.

Concrete barriers have also proven difficult for Autopilot to detect, which has resulted in deadly crashes. In addition, phantom braking has been a repeated problem.

All in all, Tesla’s Autopilot has been earning an increasingly bad reputation for its supposed inability to live up to its name.

Preliminary NHTSA data on Tesla Autopilot crashes is misleading

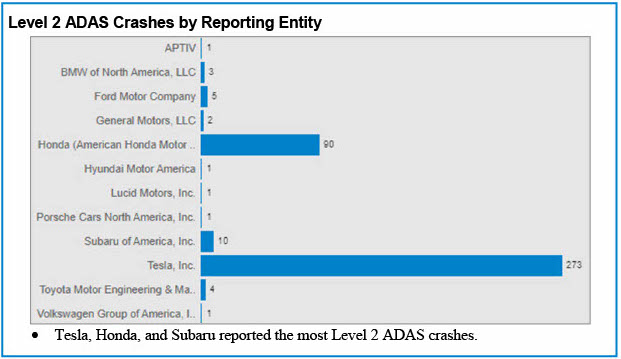

Anyone who glances at a recently released NHTSA report regarding crashes involving Level 2 advanced driver assistance systems (ADAS) will likely find Tesla’s numbers outrageous. There’s a huge difference between the number of crashes involving Tesla Autopilot and those of the nearest competitor, Honda.

More specifically, the NHTSA report shows 273 crashes involving Tesla’s driver assistance system between July 20, 2021, and May 15, 2022, according to Consumer Reports. The next-most-reported manufacturer on the list, Honda, had only 90 crashes reported.

But it’s crucial to keep a few caveats in mind when considering these numbers. Most important, the majority of the incidents on the NHTSA’s list were reported automatically by the vehicles themselves over an internet connection. Not all cars have this capability, so crashes involving them are much likelier to go unreported.

It’s also necessary to consider the number of vehicles on the road with Level 2 ADAS capabilities. Manufacturers such as Tesla, which sell more vehicles with these features, will feature more prominently in reports such as the NHTSA’s simply because more of their cars are in use.
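To see why fleet size matters, here is a minimal sketch of how raw crash counts could be turned into a rate per 100,000 equipped vehicles. Everything in it is an assumption made for illustration: "Maker A" and "Maker B" are hypothetical, their crash counts and fleet sizes are invented placeholders, and the `crashes_per_100k` helper is not something the NHTSA report provides.

```python
def crashes_per_100k(crashes: int, fleet_size: int) -> float:
    """Return reported crashes per 100,000 vehicles equipped with Level 2 ADAS."""
    if fleet_size <= 0:
        raise ValueError("fleet_size must be positive")
    return crashes * 100_000 / fleet_size


# HYPOTHETICAL makers and numbers, invented purely for illustration.
# They are not taken from the NHTSA report or any other source.
hypothetical_makers = {
    "Maker A": {"crashes": 300, "fleet_size": 1_000_000},
    "Maker B": {"crashes": 90, "fleet_size": 200_000},
}

# Normalize each maker's raw count by the size of its equipped fleet.
rates = {
    name: crashes_per_100k(data["crashes"], data["fleet_size"])
    for name, data in hypothetical_makers.items()
}

for name, rate in rates.items():
    raw = hypothetical_makers[name]["crashes"]
    print(f"{name}: {raw} reported crashes, {rate:.1f} per 100k vehicles")
```

With these made-up inputs, Maker A has more than three times as many reported crashes as Maker B, yet Maker B has the higher rate per vehicle. A rate like this still would not correct for the reporting differences described above, so it is only one of several adjustments a fair comparison would need.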

Even the NHTSA admits, “The design of the report is not to look at any one company or to compare companies.”

Why is the NHTSA compiling this data?

The NHTSA report and others going forward “will be used to help guide research, rulemaking, and enforcement” of all advanced driver assistance systems, not just Tesla Autopilot, Consumer Reports explains. “Enforcement actions can and have been taken,” Anne Collins, the NHTSA’s associate administrator for enforcement, says.

As for the NHTSA’s wider role, the U.S. agency’s stated mission is to “save lives, prevent injuries, and reduce economic costs due to road traffic crashes, through education, research, safety standards, and enforcement activity.”

Much of the NHTSA’s work involves performing crash tests on vehicles to help keep the public as safe as possible. These crash tests include simulations of head-on collisions, side-impact crashes, and rollovers. The NHTSA also works to identify ongoing safety problems with vehicles and issues recalls as necessary to ensure that manufacturers address those problems.

Thanks to the NHTSA’s work, drivers and passengers can be assured they are far safer in vehicles than they would have been decades ago.