Watch: Self-Driving Tesla Causes an Eight-Car Pile Up On SF Bay Bridge

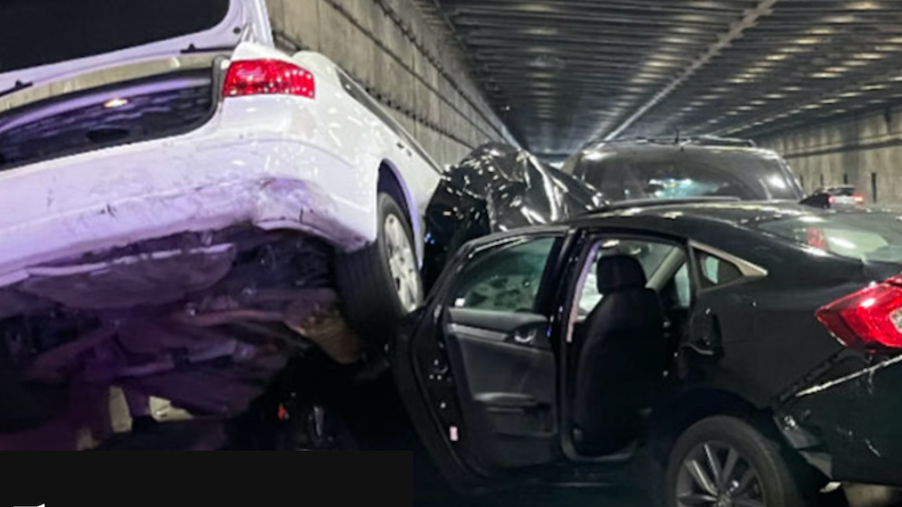

On Thanksgiving Day, everything was running smoothly on the San Francisco Bay Bridge. That changed quickly when a Tesla Model S changed lanes, then came to a stop in the fast lane. Because the stop was so abrupt, drivers behind it had little time to react, and the result was an eight-car pile-up. Reports said nine people were injured, including children. Was Tesla self-driving to blame?

Was the Tesla on self-driving autopilot?

The Intercept was able to get images through the California Public Records Act, which show the result of the incident. The elephant in the room is whether Tesla Autopilot was active during the series of maneuvers. Yes, it was, according to the owner.

The driver told police that the left turn signal came on, and then the brakes activated as the car moved into the left lane. It then slowed down “to a stop directly in the path of travel.” A number of videos from the bridge confirm what the driver experienced.

Was the Tesla accident a case of self-driving and phantom braking?

Ironically, Tesla CEO Elon Musk had announced earlier that day that the “Full Self-Driving” upgrade was available immediately. He called it a “major milestone,” with over 285,000 cars already driving with the technology. As usual, the National Highway Traffic Safety Administration added this incident to its growing list of similar self-driving accidents it is investigating.

The NHTSA says that it is investigating 273 “Autopilot” crashes that occurred between July 2021 and June 2022. According to the administration, a majority of the deaths in those 273 incidents involved Tesla vehicles. In the 35 accidents it has looked into so far, 19 people died.

Recently, “phantom braking” incidents during self-driving mode have substantially increased. According to the Washington Post, over 100 complaints were received by the NHTSA in only three months. Google’s Waymo, which is also developing autonomous driving technology, announced it wasn’t using the “self-driving” term anymore.

How are the feds overseeing companies like Tesla?

“Unfortunately, we see that some automakers use the term ‘self-driving’ in an inaccurate way, giving consumers and the general public a false impression of the capabilities of driver-assist technology,” Waymo said in a statement. “That false impression can lead someone to unknowingly take risks that could jeopardize not only their own safety but the safety of people around them.”

It has gotten to the point that the Transportation Department has felt the need to issue warnings about the term. “I keep saying this until I’m blue in the face, anything on the market today that you can buy is a driver assistance technology, not a driver replacement technology,” Secretary of Transportation Pete Buttigieg said. “I don’t care what it’s called. We need to make sure that we’re crystal clear about that, even if companies are not.”

We keep hearing about these self-driving incidents. But there doesn’t seem to be any federal enforcement addressing the gap between what manufacturers claim and the rising number of crashes and injuries. For his part, Tesla’s Musk has tweeted that an update of the system is coming this month.

Whether it addresses the problems Tesla drivers are experiencing, we’ll have to wait and see. But the vision of self-driving cars by 2025 seems further away than it has ever been, with some companies abandoning the development of the technology.